SkillsBench Review

What we can learn from the first benchmark to treat Agent Skills as first-class artifacts

1. What Problem SkillsBench Solves

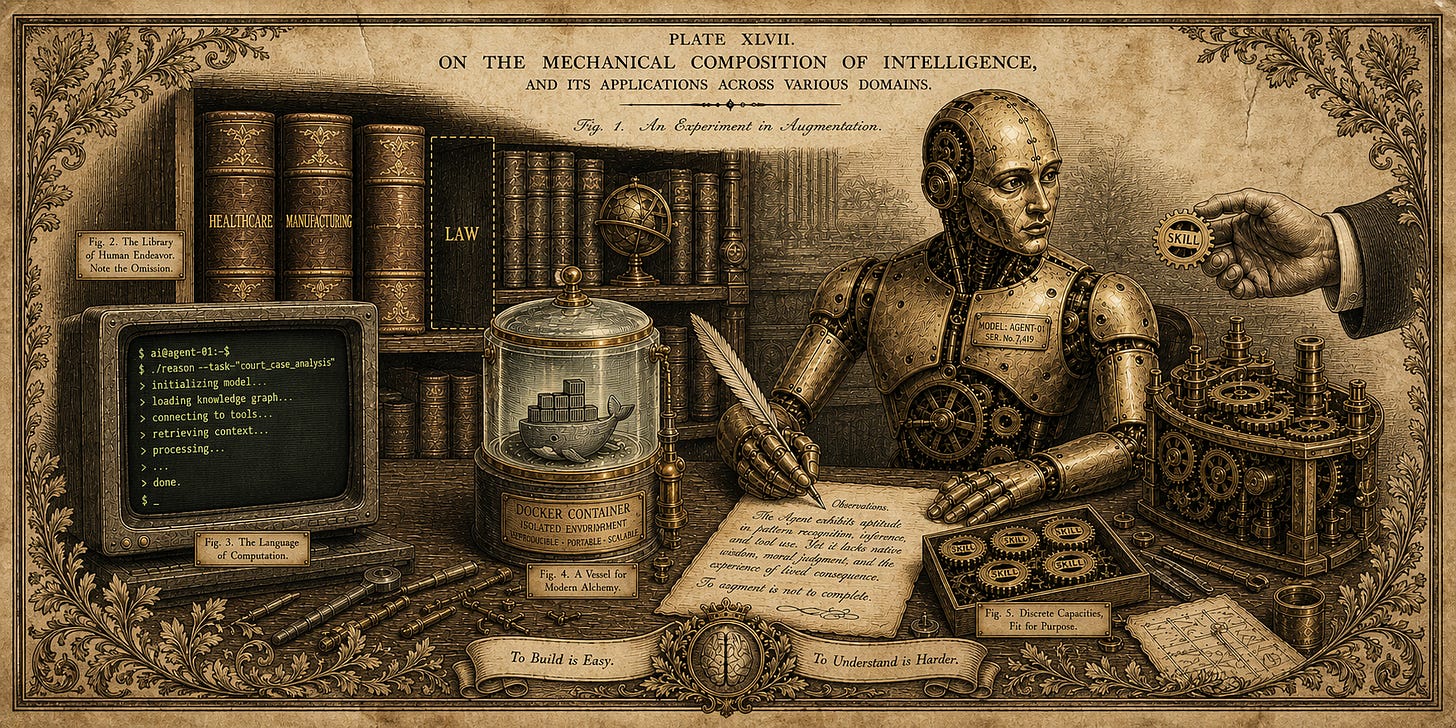

Foundation models lack the procedural knowledge that makes professionals effective. Fine-tuning is expensive and prompting alone is brittle. Agent Skills offer a modular alternative. They are structured packages of instructions, code templates, and resources that augment model behavior at inference time without modifying the model itself. The Skills ecosystem has grown rapidly, with community repositories now hosting tens of thousands of Skills spanning software engineering, UI/UX design, and enterprise workflows. Yet until recently, no benchmark systematically asked: do Skills actually help?

SkillsBench (Li et al., 2026) fills this gap. It evaluates 84 tasks across 11 domains, each executed under three conditions: no Skills, curated Skills, and self-generated Skills. Across 7 model-harness configurations and 7,308 trajectories, the headline finding is clear: curated Skills improve resolution rates by +16.2 percentage points on average. The benefit is largest in domains requiring specialized procedural knowledge (Healthcare +51.9pp, Manufacturing +41.9pp). Self-generated Skills, by contrast, provide negligible or negative benefit (−1.3pp), confirming that effective Skills require human domain expertise and models cannot reliably produce this knowledge on their own.

These findings provide valuable signals. However, the utility of a benchmark depends not only on its results but also on how faithfully those results represent the phenomenon being measured. After a thorough examination of the paper, its appendices, and the open-source repository, we conclude that while SkillsBench is a significant methodological advance, its design choices inherently limit its ability to reflect real-world Skill development and usage.

2. The Good Parts

Before offering our critique, we should acknowledge what SkillsBench does well.

Paired evaluation is the right methodology. Running every task both with and without Skills—and measuring the difference—is a genuine innovation. No prior benchmark treats Skills as first-class evaluation artifacts. The three-condition design (none, curated, self-generated) isolates the Skill signal from general model capability, and the normalized gain metric, borrowed from physics education research (Hake, 1998), enables comparison across different baselines. This should become standard practice for anyone evaluating agent augmentation.
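To make the normalized gain concrete, here is a minimal sketch assuming the standard Hake (1998) form, in which the improvement is divided by the headroom left above the baseline. The function names and the pass rates are hypothetical illustrations, and the paper's exact variant of the metric may differ.

```python
def absolute_gain_pp(baseline: float, with_skills: float) -> float:
    """Paired difference in pass rate, in percentage points."""
    return (with_skills - baseline) * 100.0


def normalized_gain(baseline: float, with_skills: float) -> float:
    """Hake-style normalized gain: share of the remaining headroom realized.

    Both arguments are pass rates in [0, 1]. This is the standard form from
    physics education research; it is an assumption that the benchmark uses
    exactly this variant.
    """
    for rate in (baseline, with_skills):
        if not 0.0 <= rate <= 1.0:
            raise ValueError("pass rates must lie in [0, 1]")
    if baseline == 1.0:
        raise ValueError("undefined when the baseline pass rate is already 100%")
    return (with_skills - baseline) / (1.0 - baseline)


# Hypothetical pass rates: both configurations gain 20 percentage points.
weak_model = (0.20, 0.40)
strong_model = (0.70, 0.90)

for name, (base, skilled) in [("weak", weak_model), ("strong", strong_model)]:
    print(f"{name}: +{absolute_gain_pp(base, skilled):.1f}pp, "
          f"g = {normalized_gain(base, skilled):.2f}")
```

The two hypothetical configurations show the same absolute gain, yet the stronger one closes about two thirds of its remaining headroom while the weaker one closes a quarter. That difference is what the normalized metric is meant to surface when baselines differ.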

The tasks are human-authored and seriously vetted. Instead of opting for the convenience of synthetic or LLM-generated examples, SkillsBench required human-written task instructions, verified through AI-detection screening and human review. 105 contributors from academia and industry submitted 322 candidate tasks. Every submission underwent automated checks—ensuring required files were present, verifying the reference solution passed all tests, screening for AI-generated content—followed by multi-stage human review. Reviewers evaluated tasks against explicit criteria: data validity, task realism, solution quality, Skill quality, and anti-cheating robustness. The 26.7% acceptance rate (86 tasks from 322 submissions) indicates genuine selectivity.

The benchmark is community-driven, with meaningful credit for contributors. Contributors who get one task merged earn co-authorship on the paper. This is a serious incentive structure that treats community members as genuine partners rather than anonymous labor. The benchmark was built by people from Stanford, UC Berkeley, CMU, Columbia, Oxford, Princeton, and over a dozen other institutions, plus industry contributors from Amazon, ByteDance, and Foxconn. The CONTRIBUTING.md and MAINTAINER.md provide clear standards, and an active Discord channel lets contributors validate ideas before building. Tasks are packaged in Docker containers with public repositories, making them auditable and reproducible by anyone. This openness stands in contrast to proprietary, closed benchmarks that cannot be independently examined.

That said, the co-authorship incentive also shapes what kinds of tasks get built—a dynamic we explore below.

3. Limitations

SkillsBench’s strengths are real, but its design choices also create specific, consequential blind spots—particularly around what kinds of tasks are included and how success is measured.

3.1. Task Representativeness

The co-authorship incentive shaped the task distribution. Earning co-authorship on a paper with authors from Stanford, Berkeley, CMU, Columbia, Oxford, and Princeton is powerful currency for an academic, particularly a graduate student. For an industry practitioner in healthcare, manufacturing, or law, it is far less compelling.

The influence is visible in the numbers. The benchmark’s repository shows Software Engineering dominating with 17 tasks, followed by Media & Content Production with 11 and Natural Sciences with at least 10. In contrast, Healthcare has 2 tasks (lab-unit-harmonization and protein-expression-analysis), Manufacturing has 3, and Legal has none, even though the contributor guide designates them as priority domains. The domains where the paper reports the largest Skills gains, such as Healthcare (+51.9pp) and Manufacturing (+41.9pp), therefore rest on the smallest, least representative samples. A two-task domain cannot support general claims about Skills efficacy in that domain.
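One way to see why a two-task domain is fragile is to ask how far the domain average moves when a single task is dropped. The sketch below is illustrative only: the per-task gains are hypothetical values chosen for the example, not figures from the paper, and `leave_one_out_range` is a helper name introduced here.

```python
from statistics import mean


def leave_one_out_range(task_gains: list[float]) -> tuple[float, float]:
    """Return (min, max) of the domain mean when any single task is dropped."""
    if len(task_gains) < 2:
        raise ValueError("need at least two tasks")
    means = [
        mean(task_gains[:i] + task_gains[i + 1:])
        for i in range(len(task_gains))
    ]
    return min(means), max(means)


# Hypothetical per-task gains in percentage points.
two_task_domain = [90.0, 10.0]
seventeen_task_domain = [90.0, 10.0] * 8 + [90.0]

print(leave_one_out_range(two_task_domain))        # (10.0, 90.0)
print(leave_one_out_range(seventeen_task_domain))  # (50.0, 55.0)
```

With the same mix of strong and weak per-task gains, removing one task swings the two-task average across the whole range, while the seventeen-task average barely moves.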

Tasks do not account for real-world frequency. Nowhere does the benchmark measure how common a task is in professional practice. A contributor with expertise in exoplanet transit detection can build the exoplanet-detection-period task. A contributor familiar with formal mathematics can build the lean4-proof task. These are intellectually interesting, but how often does a professional encounter them relative to, say, drafting a contract amendment or reconciling medication lists? In the real world, Skills are created for frequently performed workflows, since the upfront cost of writing procedural documentation only makes sense if the task recurs. By pairing every task with a Skill regardless of how common the task is, the benchmark measures an idealized scenario where the right Skill is always available. Real-world deployment requires finding the right Skill for the right task, which is a retrieval and matching problem the benchmark entirely sidesteps. Because deployment adds this retrieval step, the +16.2pp average may be an upper bound on the gains Skills deliver in practice.

Skills are purpose-built for their tasks, not curated from the wild. The benchmark’s accompanying paper reports an ecosystem analysis of 47,150 Skills aggregated from open-source repositories, the Claude Code ecosystem, and corporate partners. However, for the benchmark tasks themselves, contributors authored the Skills alongside their tasks. The repository’s CONTRIBUTING.md instructs contributors to create an environment/skills/ directory as part of their submission. Every Skill file we examined was committed to the repository by the task author. For example, the 13f-analyzer Skill for the sec-financial-report task provides a Python script for comparing hedge fund holdings “from 2025-q2 to 2025-q3,” precisely the quarters the task asks about. The Skill does not contain the answer, but it is clearly optimized for the specific task instance. This is simultaneously a strength (guaranteed relevance) and a limitation: it does not reflect the real-world scenario where an agent must retrieve and apply a Skill written by someone else for a related but not identical problem.

3.2. The Compounding Factor: Deterministic Verifiers

If the co-authorship incentive skewed who contributed, the deterministic verifier requirement skewed what they could contribute. The benchmark requires programmatic pytest assertions for pass/fail determination, explicitly avoiding LLM-as-a-judge evaluation for reproducibility. This choice, while principled, systematically excludes entire categories of tasks, and the excluded categories overlap with the domains that are already underrepresented.

Deterministic verifiers work for tasks with objectively correct outputs: code that compiles, numerical calculations within tolerance, files that conform to a schema. They fail for tasks where quality is subjective, multidimensional, or context-dependent: open-ended analysis, creative production, judgment under uncertainty, interactive negotiations, or any other task where “correctness” exists on a spectrum. These are precisely the tasks that define high-skill professional work in law, medicine, management, and consulting. A legal expert who proposed a task to “assess the enforceability of a non-compete clause” would see it rejected by the benchmark’s maintainers, not because it is a bad task, but because it cannot be reduced to a pytest assertion. The benchmark did not just fail to attract legal tasks; it made them structurally infeasible. The same barrier applies to clinical tasks like assessing whether a patient meets trial eligibility criteria, or managerial tasks like evaluating a vendor proposal against multiple competing priorities. These judgment-intensive workflows are where domain-specific Skills might deliver the greatest value. Yet they cannot appear in a benchmark that insists on purely deterministic verification.
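For readers unfamiliar with the style, a deterministic verifier of the kind described above might look like the following sketch. It is not taken from the SkillsBench repository: the schema, the file name `result.json`, the patient record, and the helper `check_output` are all hypothetical. The only outside fact used is the glucose conversion factor (1 mmol/L is about 18.016 mg/dL).

```python
import json
import math
import tempfile
from pathlib import Path

REQUIRED_KEYS = {"patient_id", "glucose_mg_dl"}  # hypothetical output schema


def check_output(path: Path, expected_glucose: float) -> None:
    """Pass/fail check written the way a pytest test body would be."""
    record = json.loads(path.read_text())
    # Schema conformance: exactly the required keys, nothing else.
    assert set(record) == REQUIRED_KEYS
    # Numerical result within a relative tolerance.
    assert math.isclose(record["glucose_mg_dl"], expected_glucose, rel_tol=1e-3)


def test_lab_unit_conversion() -> None:
    # Hypothetical agent output: 5.5 mmol/L of glucose reported in mg/dL.
    with tempfile.TemporaryDirectory() as tmp:
        out = Path(tmp) / "result.json"
        out.write_text(json.dumps({"patient_id": "p-001", "glucose_mg_dl": 99.1}))
        check_output(out, expected_glucose=5.5 * 18.016)


if __name__ == "__main__":
    test_lab_unit_conversion()
    print("verifier passed")
```

Every check here has a single right answer. Nothing comparable can be written for “is this non-compete clause enforceable?”, which is the structural barrier the paragraph above describes.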

Even within the narrower set of tasks that can be verified programmatically, the reliance on exact output matching creates its own distortions. The paper’s own failure analysis notes that “Specification Violation” failures drop sharply with Skills, hinting that some gains come from better output formatting rather than deeper procedural understanding. But is formatting compliance what anyone evaluating a Skills ecosystem should primarily care about?

Taken together, the incentive structure and the verifier requirement create a double filter. The benchmark attracts contributors who find co-authorship valuable (academics, particularly in CS-adjacent fields) and accepts tasks that produce verifiable outputs (computational problems with well-defined correct answers). The tasks that survive this double filter are not a representative sample of professional work. They are a sample of professional work that is both interesting to CS academics and amenable to automated testing. The benchmark’s headline +16.2pp gain tells us something about Skills in a narrow, computation-friendly slice of the world. It tells us considerably less about Skills where they matter most.

4. Toward Better Evaluations of Agent Skills

The limitations we have described do not represent oversights; they reflect genuine trade-offs. Requiring deterministic verification ensures reproducibility. Incentivizing contributions with co-authorship attracts academic talent. Allowing contributors to author both task and Skill guarantees relevance. These are defensible choices for a first-of-its-kind benchmark. But future benchmarks for Skills can explore different points on these trade-off curves. Several directions are worth pursuing.

4.1. Independent Task and Skill Authorship

One person writes the task; a different person, blind to the full task specification, writes or selects the Skill based on an abstract description of the task type or a collection of example tasks. This would test whether Skills encode genuinely reusable procedural knowledge rather than task-specific guidance. The task author specifies the domain, the input artifacts, and the success criteria; the Skill author receives only a high-level description of the workflow (for example, “compare quarterly SEC 13F filings to identify changes in hedge fund positions”) and must write procedural guidance that would help an agent complete any task of that general type. This separation breaks the feedback loop that makes every Skill perfectly matched to its accompanying task, and it more closely resembles how Skills are authored and used in real ecosystems—written once for a class of problems, then applied to specific instances later.

4.2. Hybrid Verification

Deterministic verifiers remain appropriate for tasks with objective answers. But for the many important tasks where quality cannot be reduced to a pytest assertion, future benchmarks could draw on two complementary evaluation methods. The first is rubric-based LLM judges: a language model evaluates outputs against a detailed scoring rubric, with the rubric and judge calibrated against a sample of human ratings. This approach is relatively fast, scalable, and cost-effective, and it has been adopted successfully in benchmarks like HealthBench and TutorBench. The trade-off is that LLM judges can introduce systematic biases, such as favoring certain output styles, inflating scores for the model family they belong to, or missing domain-specific nuances that a human expert would catch. The second is arena-style evaluation: a domain expert, or a panel of experts, compares two outputs side by side without knowing which was produced with Skills. The expert decides which is better, and the Skill’s contribution is measured by win rate. This approach directly captures professional judgment and is harder to game. The trade-off is that it is slow, expensive, and logistically demanding. The two methods are not mutually exclusive. A benchmark could use LLM judges for rapid, large-scale scoring and periodically validate with human arena comparisons to catch drift and calibrate thresholds. The key principle is to match the evaluation method to the nature of the task rather than forcing every task into a deterministic mold.
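The two measurements described above can be sketched in a few lines. This is an illustration under stated assumptions rather than a proposed protocol: the verdict labels, the convention of counting a tie as half a win, and the sample verdicts are all hypothetical choices made for the example.

```python
from collections import Counter

VALID_VERDICTS = {"skill", "baseline", "tie"}  # hypothetical label set


def skill_win_rate(verdicts: list[str]) -> float:
    """Share of blinded comparisons won by the with-Skills output.

    Ties count as half a win (an assumed convention).
    """
    if not verdicts:
        raise ValueError("no verdicts supplied")
    if set(verdicts) - VALID_VERDICTS:
        raise ValueError("unknown verdict label")
    counts = Counter(verdicts)
    return (counts["skill"] + 0.5 * counts["tie"]) / len(verdicts)


def judge_agreement(judge: list[str], human: list[str]) -> float:
    """Fraction of items where the LLM judge matches the human expert."""
    if not human or len(judge) != len(human):
        raise ValueError("verdict lists must be non-empty and equal in length")
    return sum(j == h for j, h in zip(judge, human)) / len(human)


# Hypothetical verdicts on the same eight output pairs.
human = ["skill", "skill", "baseline", "tie", "skill", "baseline", "skill", "skill"]
judge = ["skill", "skill", "baseline", "skill", "skill", "baseline", "skill", "skill"]

print(f"human win rate:  {skill_win_rate(human):.4f}")   # 0.6875
print(f"judge win rate:  {skill_win_rate(judge):.4f}")   # 0.7500
print(f"judge agreement: {judge_agreement(judge, human):.3f}")  # 0.875
```

In this toy sample the judge turns the one human tie into a win for the Skill-assisted output, which nudges the win rate upward. Periodic human validation is meant to catch exactly this kind of drift.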

4.3. Measuring Task Commonality

Future benchmarks could ask contributors to estimate how frequently a task occurs in professional practice and stratify results accordingly. If Skills show different efficacy on common versus rare tasks, current designs cannot detect it. This matters because a Skill that helps on a daily workflow is far more valuable than one that helps on a once-a-year edge case, even if both show the same percentage-point improvement. Commonality estimates could be validated against job posting analyses or workflow surveys, and the benchmark could report Skill efficacy separately for high-frequency and low-frequency task bands.
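A stratified report of this kind is simple to compute once each task carries a frequency label. The sketch below assumes a hypothetical two-band labeling ("high" and "low") and hypothetical per-task pass rates. The field names and the helper `gain_by_band` are illustrative, not part of any existing benchmark.

```python
from collections import defaultdict
from statistics import mean


def gain_by_band(results: list[dict]) -> dict[str, float]:
    """Mean paired gain in percentage points, grouped by frequency band."""
    deltas: dict[str, list[float]] = defaultdict(list)
    for row in results:
        deltas[row["band"]].append(row["with_skills"] - row["baseline"])
    return {band: mean(values) * 100.0 for band, values in deltas.items()}


# Hypothetical tasks: band is the contributor's frequency estimate,
# baseline and with_skills are pass rates in [0, 1].
results = [
    {"task": "daily-workflow-a", "band": "high", "baseline": 0.30, "with_skills": 0.60},
    {"task": "daily-workflow-b", "band": "high", "baseline": 0.50, "with_skills": 0.70},
    {"task": "rare-edge-case-a", "band": "low", "baseline": 0.20, "with_skills": 0.30},
    {"task": "rare-edge-case-b", "band": "low", "baseline": 0.40, "with_skills": 0.40},
]

for band, gain in gain_by_band(results).items():
    print(f"{band}-frequency tasks: +{gain:.1f}pp")
```

Pooling all four hypothetical tasks would give a single +15pp average. Splitting by band shows the gain is concentrated in the high-frequency workflows, which a single aggregate cannot reveal.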

4.4. Rethinking Contributor Incentives

If future Skills benchmarks want tasks from practicing clinicians, lawyers, and manufacturers rather than just CS-adjacent academics, the incentive structure needs to align with what motivates those professionals. Three complementary approaches stand out. First, pay professionals at rates commensurate with their expertise. For example, GPQA paid its PhD-level domain experts roughly $95 per hour with bonuses tied to question quality. Second, leverage existing expert-curated datasets. Benchmarks like GDPval and APEX have already invested in assembling domain experts to build tasks in medicine, law, consulting, and investment banking. Future Skills benchmarks could adapt or extend these datasets. Third, build enterprise partnerships where the incentive is influence rather than payment. Companies can be motivated to contribute tasks because they want AI labs to measure model capabilities on workflows their industries care about. This aligns benchmark coverage with genuine economic demand. Together, these three approaches would yield a task distribution that better reflects professional practice and economic demand.

5. Conclusions

SkillsBench takes a necessary first step. It demonstrates that human-authored procedural knowledge can substantially improve agent performance, that models cannot reliably self-generate this knowledge, and that how Skills are designed matters. But the benchmark’s methodology shaped who contributed, what they could contribute, and how success is measured, all of which limit what its results can tell us about Skills in the wild. The next generation of Skills benchmarks can improve on its ecological validity by exploring the alternative evaluation designs discussed above: independent task and Skill authorship, hybrid verification, task-commonality measurement, and incentives that reach practicing professionals.